Virtual Memory

Page tables, TLBs, and the elegant abstraction that gives every process its own address space.

Updated March 3, 2026

Why Virtual Memory?

Every process believes it owns the entire address space. The operating system (OS) and the memory management unit (MMU) collaborate to map virtual pages to physical frames, providing:

- Isolation — process A can’t touch process B’s memory.

- Overcommit — total virtual memory can exceed physical RAM.

- Convenience — contiguous virtual ranges backed by scattered physical frames.

Virtual memory is both a hardware and software concept. The MMU and OS kernel each own distinct responsibilities.

Most general-purpose CPU architectures include an MMU (many microcontroller-class cores do not), for example:

- x86-64

- ARM64

- PowerPC

- MIPS

- RISC-V

| MMU / CPU (hardware) | OS Kernel (software) |
|---|---|
| Address translation per access | Creating/destroying page tables |
| TLB lookup and caching | Physical frame allocation |
| Hardware page-table walk | Page fault handling (alloc, swap in) |
| Permission enforcement (R/W/X) | Setting permission bits |
| Raising page faults | Swap space and eviction policy |
| Page-table format and page sizes (ISA-fixed) | Address space layout (mmap, brk) |

Each side also decides different things:

| MMU / CPU (hardware) | OS Kernel (software) |
|---|---|
| Translation speed | Which pages are mapped |
| TLB replacement policy | Which physical frames to use |
| Page-table walk implementation | Swap or eviction policy |
| Supported page sizes (ISA-fixed) | Per-process address space layout |

Page Tables

x86-64 Page Tables

On x86-64, a 4-level radix tree translates 48-bit virtual addresses to physical addresses. Each level is indexed by 9 bits of the virtual address, and the final level points to a 4 KB page frame; the low 12 bits are the byte offset within that frame.

Virtual Address (48-bit)

┌────────┬────────┬────────┬────────┬─────────────┐
│ PML4   │ PDPT   │ PD     │ PT     │ Offset      │
│ 9 bits │ 9 bits │ 9 bits │ 9 bits │ 12 bits     │
└────────┴────────┴────────┴────────┴─────────────┘

- PML4 - Page Map Level 4

- PDPT - Page Directory Pointer Table

- PD - Page Directory

- PT - Page Table

- Offset - 12 bits

Example of x86-64 Page Table Walk

Translate virtual address 0x00007F4AB3C1D000 to a physical address:

Step 1: Split the 48-bit address into four 9-bit indices and a 12-bit offset: PML4 = 254, PDPT = 298, PD = 414, PT = 29, offset = 0x000.
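The split can be checked with a few shifts and masks. The following is a minimal sketch; the function name `split_va` and the dictionary keys are illustrative choices, not part of any real API.

```python
def split_va(va: int) -> dict:
    """Split a 48-bit x86-64 virtual address into its 4 KB paging fields."""
    return {
        "pml4": (va >> 39) & 0x1FF,    # bits 47:39
        "pdpt": (va >> 30) & 0x1FF,    # bits 38:30
        "pd": (va >> 21) & 0x1FF,      # bits 29:21
        "pt": (va >> 12) & 0x1FF,      # bits 20:12
        "offset": va & 0xFFF,          # bits 11:0
    }


print(split_va(0x00007F4AB3C1D000))
# {'pml4': 254, 'pdpt': 298, 'pd': 414, 'pt': 29, 'offset': 0}
```

Each 9-bit field selects one of the 512 entries in a table, which is why the mask is 0x1FF.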

Step 2: Walk the four levels, using each index to look up the next table base:

- Read CR3 → PML4 base address (0x1A3000).

- PML4[254] → read 8 bytes at 0x1A3000 + 254×8. Next base: 0x3F5000.

- PDPT[298] → read 8 bytes at 0x3F5000 + 298×8. Next base: 0x7A2000.

- PD[414] → read 8 bytes at 0x7A2000 + 414×8. Next base: 0x1B8000.

- PT[29] → read 8 bytes at 0x1B8000 + 29×8. Physical frame: 0xC40000.

- Result: 0xC40000 + 0x000 = 0xC40000.

Each table is exactly one page (512 entries × 8 bytes = 4 KB). The full walk costs 4 dependent memory accesses (on the order of 200 cycles when the page-table entries are not already in the data caches), which is why the TLB exists.
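The walk can be simulated in software to make the four dependent reads explicit. This is only a sketch: `phys_mem` is a hypothetical dictionary standing in for physical memory, populated with the example values from this section, and only the present bit (bit 0) of each entry is modelled.

```python
ENTRY_SIZE = 8                       # each page-table entry is 8 bytes
ADDR_MASK = 0x000F_FFFF_FFFF_F000    # bits 51:12 hold the next base address
PRESENT = 0x1                        # bit 0: entry is valid

# Hypothetical physical memory: address of an entry -> entry value.
phys_mem = {
    0x1A3000 + 254 * ENTRY_SIZE: 0x3F5000 | PRESENT,   # PML4[254] -> PDPT
    0x3F5000 + 298 * ENTRY_SIZE: 0x7A2000 | PRESENT,   # PDPT[298] -> PD
    0x7A2000 + 414 * ENTRY_SIZE: 0x1B8000 | PRESENT,   # PD[414]   -> PT
    0x1B8000 + 29 * ENTRY_SIZE: 0xC40000 | PRESENT,    # PT[29]    -> frame
}


def walk(cr3: int, va: int):
    """Return (physical address, number of memory accesses) for a 4 KB page."""
    base = cr3 & ADDR_MASK
    accesses = 0
    for shift in (39, 30, 21, 12):               # PML4, PDPT, PD, PT
        index = (va >> shift) & 0x1FF
        entry = phys_mem.get(base + index * ENTRY_SIZE, 0)
        accesses += 1
        if not entry & PRESENT:
            raise LookupError(f"page fault at level with shift {shift}")
        base = entry & ADDR_MASK
    return base | (va & 0xFFF), accesses


pa, accesses = walk(0x1A3000, 0x00007F4AB3C1D000)
print(hex(pa), accesses)   # 0xc40000 4
```

Each iteration needs the result of the previous one before it can issue its read, so the four accesses cannot overlap.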

TLB: Translation Lookaside Buffer

Walking four levels of page tables on every access would take too long. The Translation Lookaside Buffer caches recent translations — typically 64–1536 entries with a >99 % hit rate for well-behaved workloads.

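TLB misses are expensive: roughly 20–100 cycles when the page-table entries are found in the data caches, and considerably more when the walk has to go to DRAM. Huge pages (2 MB / 1 GB) reduce TLB pressure by covering more memory per entry.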

Example of a TLB

- This simple TLB has 16 entries.

- Assume 2^10 = 1024 pages are mapped.

- Page numbers are 10 bits.

- Index into the TLB with 4 bits (let's arbitrarily pick bits 8, 5, 3, and 0).
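The indexing scheme in this example can be sketched as follows. The helper names `tlb_index` and `tlb_tag` are hypothetical, and treating the six unused page-number bits as a tag is an assumption based on how set-indexed caches usually work.

```python
INDEX_BITS = (8, 5, 3, 0)        # page-number bits that select the TLB slot
TAG_BITS = (9, 7, 6, 4, 2, 1)    # the remaining six bits identify the page


def gather_bits(value: int, positions) -> int:
    """Concatenate the selected bits of value, most significant first."""
    result = 0
    for bit in positions:
        result = (result << 1) | ((value >> bit) & 1)
    return result


def tlb_index(vpn: int) -> int:
    return gather_bits(vpn, INDEX_BITS)    # 0..15


def tlb_tag(vpn: int) -> int:
    return gather_bits(vpn, TAG_BITS)      # 0..63


# Pages 544 and 32 share slot 4 but carry different tags.
print(tlb_index(544), tlb_tag(544))   # 4 32
print(tlb_index(32), tlb_tag(32))     # 4 0
```

With 1024 pages and 16 slots, 64 different pages compete for each slot, so the stored tag is what tells the TLB whether the cached translation is the one being asked for.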

Questions

- Why is the TLB not a simple array?

- How does the TLB know which process the page belongs to?

- How does the TLB know the physical address is not for a different process?

Answers

- The TLB is not a simple array because of the trade-off between its size and the number of translations it might need to hold. A simple array indexed by virtual page number would need one entry for every page in the virtual address space.

- The TLB stores a process identifier (an address-space ID, such as the PCID on x86-64 or the ASID on ARM64) alongside each cached translation.

- Because each entry is tagged with the process ID, a lookup hits only when both the process ID and the virtual page number match, so the physical address it returns always belongs to the current process.
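A small model makes the role of the process tag concrete. This is a minimal sketch of a direct-mapped TLB; the class names, the `asid` field name, and the modulo indexing are illustrative assumptions rather than a description of any particular CPU.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TlbEntry:
    asid: int   # process / address-space identifier
    vpn: int    # virtual page number
    pfn: int    # physical frame number


class TaggedTlb:
    def __init__(self, slots: int = 16):
        self.slots: List[Optional[TlbEntry]] = [None] * slots

    def _slot(self, vpn: int) -> int:
        return vpn % len(self.slots)      # simplistic index function

    def lookup(self, asid: int, vpn: int) -> Optional[int]:
        entry = self.slots[self._slot(vpn)]
        # A hit requires both the process tag and the page number to match.
        if entry is not None and entry.asid == asid and entry.vpn == vpn:
            return entry.pfn
        return None                        # miss: fall back to a page walk

    def insert(self, asid: int, vpn: int, pfn: int) -> None:
        self.slots[self._slot(vpn)] = TlbEntry(asid, vpn, pfn)


tlb = TaggedTlb()
tlb.insert(asid=1, vpn=0x2A, pfn=0x100)
print(tlb.lookup(1, 0x2A))   # 256
print(tlb.lookup(2, 0x2A))   # None
```

Process 2 uses the same virtual page number but misses, so it can never receive process 1's frame from the cache.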

Demand Paging & Page Faults

Pages start unmapped. On first access the CPU raises a page fault; the OS allocates a frame, zeroes it, updates the page table, and resumes execution. This lazy strategy avoids wasting RAM on pages that are never touched.
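The fault-then-map sequence can be modelled in a few lines. This is a toy sketch, not kernel code: the `AddressSpace` class is hypothetical, a dictionary stands in for the page table, and a `bytearray` stands in for a zeroed physical frame.

```python
PAGE_SIZE = 4096


class AddressSpace:
    def __init__(self):
        self.page_table = {}   # virtual page number -> frame (bytearray)
        self.faults = 0

    def _handle_fault(self, vpn: int) -> None:
        # "OS" side: allocate a zero-filled frame and install the mapping.
        self.faults += 1
        self.page_table[vpn] = bytearray(PAGE_SIZE)

    def _frame(self, va: int):
        vpn, offset = divmod(va, PAGE_SIZE)
        if vpn not in self.page_table:     # "MMU" side: no mapping, so fault
            self._handle_fault(vpn)
        return self.page_table[vpn], offset

    def read(self, va: int) -> int:
        frame, offset = self._frame(va)
        return frame[offset]

    def write(self, va: int, value: int) -> None:
        frame, offset = self._frame(va)
        frame[offset] = value


space = AddressSpace()
space.write(0x5008, 7)        # first touch of page 5: one fault
print(space.read(0x5008))     # 7
print(space.faults)           # 1
```

Only pages that are actually touched ever get a frame, which is the memory saving the lazy strategy is after.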

Practical tip: For latency-sensitive applications, use mlock() / MAP_POPULATE to fault pages in ahead of time.
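As a rough illustration of the MAP_POPULATE half of that tip, the sketch below requests a pre-faulted anonymous mapping through Python's `mmap` module. It assumes a Unix-like system; `MAP_POPULATE` is Linux-specific and only exposed by newer Python versions, so the code falls back to an ordinary lazily faulted mapping when the constant is missing. The helper name `prefaulted_buffer` is made up for this example, and mlock() is not covered here.

```python
import mmap


def prefaulted_buffer(length: int) -> mmap.mmap:
    """Map anonymous memory, asking the kernel to fault it in up front."""
    # Fall back to 0 (no pre-faulting) where MAP_POPULATE is unavailable.
    populate = getattr(mmap, "MAP_POPULATE", 0)
    flags = mmap.MAP_PRIVATE | mmap.MAP_ANONYMOUS | populate
    return mmap.mmap(-1, length, flags=flags)


buf = prefaulted_buffer(16 * mmap.PAGESIZE)
buf[0] = 1                      # no page fault expected here on Linux
print(len(buf) // mmap.PAGESIZE, "pages mapped")
buf.close()
```

The cost of the page faults is paid once at mapping time rather than on the first access to each page.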

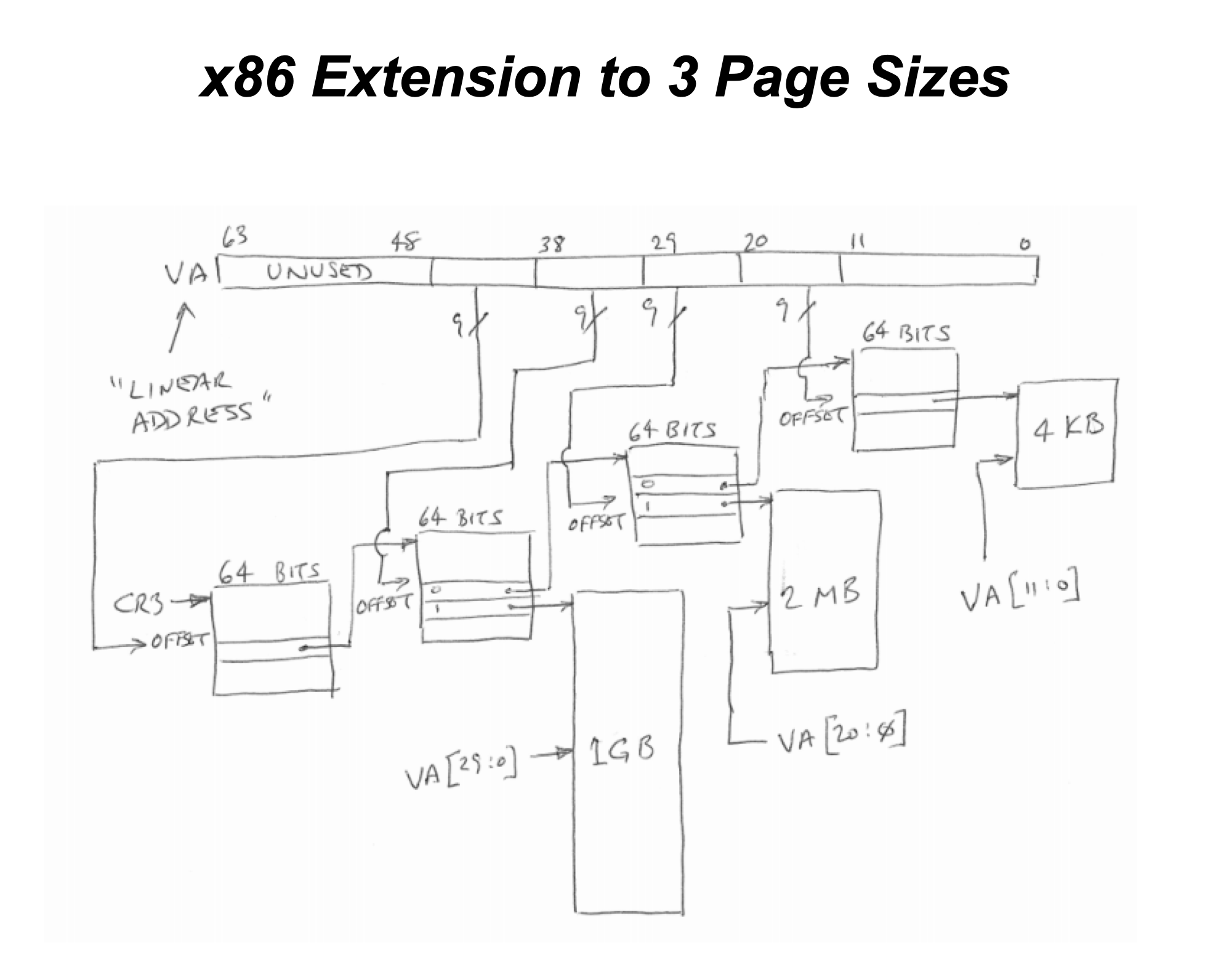

x86 Huge Pages

Huge pages (2 MB / 1 GB) reduce TLB pressure by covering more memory per entry. This is especially useful for latency-sensitive applications. When a block of memory is accessed sequentially, a larger mapping raises the chance of a TLB hit because many more consecutive addresses fall under a single entry.

How Huge Pages Change the Walk

The PS (Page Size) bit in a page table entry tells the MMU to stop walking early. The remaining address bits that would have indexed deeper tables instead become part of a larger offset into a bigger physical frame.

Using the same virtual address 0x00007F4AB3C1D000 from the earlier example:

4 KB — 4-Level Walk (standard)

┌────────┬────────┬────────┬────────┬─────────────┐
│ PML4   │ PDPT   │ PD     │ PT     │ Offset      │
│ 9 bits │ 9 bits │ 9 bits │ 9 bits │ 12 bits     │
│ 254    │ 298    │ 414    │ 29     │ 0x000       │
└───┬────┴───┬────┴───┬────┴───┬────┴─────────────┘
    ▼        ▼        ▼        ▼
CR3 → PML4 → PDPT → PD → PT → 4 KB frame

- CR3 → PML4 base (0x1A3000)

- PML4[254] → PDPT base (0x3F5000)

- PDPT[298] → PD base (0x7A2000)

- PD[414] → PT base (0x1B8000)

- PT[29] → physical frame (0xC40000)

- Result: 0xC40000 + 0x000 = 0xC40000

4 memory accesses.

2 MB — 3-Level Walk (PS=1 in PD entry)

The PT level is eliminated. The PD entry has PS=1, so the walk stops and bits [20:0] become a 21-bit offset into a 2 MB frame.

┌────────┬────────┬────────┬─────────────────────────┐
│ PML4   │ PDPT   │ PD     │ Offset                  │
│ 9 bits │ 9 bits │ 9 bits │ 21 bits                 │
│ 254    │ 298    │ 414    │ 0x1D000                 │
└───┬────┴───┬────┴───┬────┴─────────────────────────┘
    ▼        ▼        ▼
CR3 → PML4 → PDPT → PD(PS=1) → 2 MB frame

- CR3 → PML4 base (0x1A3000)

- PML4[254] → PDPT base (0x3F5000)

- PDPT[298] → PD base (0x7A2000)

- PD[414], PS=1 → physical frame (0xA00000)

- Result: 0xA00000 + 0x1D000 = 0xA1D000

3 memory accesses — one fewer than 4 KB. The PT index (29) and offset (0x000) from the 4 KB walk are merged into the 21-bit offset 0x1D000.

1 GB — 2-Level Walk (PS=1 in PDPT entry)

Both PD and PT levels are eliminated. The PDPT entry has PS=1, so the walk stops and bits [29:0] become a 30-bit offset into a 1 GB frame.

┌────────┬────────┬──────────────────────────────────┐
│ PML4   │ PDPT   │ Offset                           │
│ 9 bits │ 9 bits │ 30 bits                          │
│ 254    │ 298    │ 0x33C1D000                       │
└───┬────┴───┬────┴──────────────────────────────────┘
    ▼        ▼
CR3 → PML4 → PDPT(PS=1) → 1 GB frame

- CR3 → PML4 base (0x1A3000)

- PML4[254] → PDPT base (0x3F5000)

- PDPT[298], PS=1 → physical frame (0x80000000)

- Result: 0x80000000 + 0x33C1D000 = 0xB3C1D000

2 memory accesses — two fewer than 4 KB. The PD index (414), PT index (29), and offset (0x000) from the 4 KB walk are merged into the 30-bit offset 0x33C1D000.
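The three results differ only in how many low address bits are kept as the offset. The sketch below reproduces the arithmetic for all three page sizes; the function name `translate` and the `PAGE_SHIFT` table are illustrative, and the frame bases are the example values used in this section.

```python
PAGE_SHIFT = {"4K": 12, "2M": 21, "1G": 30}   # offset width per page size


def translate(frame_base: int, va: int, size: str):
    """Return (physical address, offset) for a frame of the given size."""
    shift = PAGE_SHIFT[size]
    if frame_base % (1 << shift) != 0:
        raise ValueError("frame base must be aligned to the page size")
    offset = va & ((1 << shift) - 1)   # low `shift` bits pass through untranslated
    return frame_base + offset, offset


va = 0x00007F4AB3C1D000
for size, base in (("4K", 0xC40000), ("2M", 0xA00000), ("1G", 0x80000000)):
    pa, offset = translate(base, va, size)
    print(size, hex(offset), hex(pa))
# 4K 0x0 0xc40000
# 2M 0x1d000 0xa1d000
# 1G 0x33c1d000 0xb3c1d000
```

The alignment check reflects that a huge frame must start on a boundary equal to its own size, so the base and the offset never overlap.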

Summary

| Page Size | Levels Walked | Memory Accesses | Offset Bits | TLB Entries to Cover 1 GB |
|---|---|---|---|---|
| 4 KB | 4 (PML4→PT) | 4 | 12 | 262,144 |
| 2 MB | 3 (PML4→PD) | 3 | 21 | 512 |
| 1 GB | 2 (PML4→PDPT) | 2 | 30 | 1 |

Walks are shorter and fewer TLB entries are needed, but huge pages must be physically contiguous (and aligned to their own size) and waste memory if only partially used.
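The last column of the table, and the related idea of how much memory a full TLB can reach, follow from simple shifts. In this sketch the helper names are hypothetical and the 1536-entry TLB size is taken from the upper end of the range quoted earlier.

```python
GIB = 1 << 30
PAGE_SHIFT = {"4 KB": 12, "2 MB": 21, "1 GB": 30}


def entries_to_cover(num_bytes: int, shift: int) -> int:
    """TLB entries needed to map num_bytes with pages of 2**shift bytes."""
    page = 1 << shift
    return (num_bytes + page - 1) // page    # round up to whole pages


def tlb_reach(entries: int, shift: int) -> int:
    """Bytes of memory covered when every TLB entry maps one page."""
    return entries << shift


for name, shift in PAGE_SHIFT.items():
    print(name, entries_to_cover(GIB, shift), tlb_reach(1536, shift) // (1 << 20), "MiB reach")
# 4 KB 262144 6 MiB reach
# 2 MB 512 3072 MiB reach
# 1 GB 1 1572864 MiB reach
```

With 4 KB pages even a 1536-entry TLB reaches only 6 MiB, which is why working sets larger than that start to miss.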

Questions

- What data access pattern benefits most from huge pages?

- What is the benefit of using huge pages when combined with the TLB?

Answers

- Access patterns that sweep through a large, contiguous region benefit most. The TLB is a cache, so a sequential scan over huge pages takes one miss per 2 MB (or 1 GB) instead of one per 4 KB. Any workload whose working set exceeds what the TLB can cover with 4 KB pages gains for the same reason.

- A huge page occupies a single TLB entry that covers 2 MB or 1 GB instead of 4 KB, so the same number of entries reaches far more memory and the number of TLB misses drops.
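A small simulation shows the size of the effect for a sequential scan. This is a sketch under simplifying assumptions: a fully associative, 64-entry TLB with LRU replacement (real TLBs are organised differently), and one access per 64-byte cache line. The function name `count_tlb_misses` is made up for the example.

```python
from collections import OrderedDict


def count_tlb_misses(addresses, page_shift: int, entries: int = 64) -> int:
    """Count misses in a fully associative LRU TLB for a stream of addresses."""
    tlb = OrderedDict()            # virtual page number -> present, in LRU order
    misses = 0
    for va in addresses:
        vpn = va >> page_shift
        if vpn in tlb:
            tlb.move_to_end(vpn)   # mark as most recently used
        else:
            misses += 1
            tlb[vpn] = True
            if len(tlb) > entries:
                tlb.popitem(last=False)   # evict the least recently used
    return misses


scan = range(0, 16 << 20, 64)      # sequential 16 MiB scan, one access per line
print(count_tlb_misses(scan, 12))  # 4096 misses with 4 KB pages
print(count_tlb_misses(scan, 21))  # 8 misses with 2 MB pages
```

The miss count equals the number of distinct pages touched, so moving from 4 KB to 2 MB pages cuts it by a factor of 512 for this scan.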